ALFA Round opens

60-day foundation sprint complete. 578 commits across 12 systems. All crates compile clean. Launching the ALFA round to fund production training and publication.

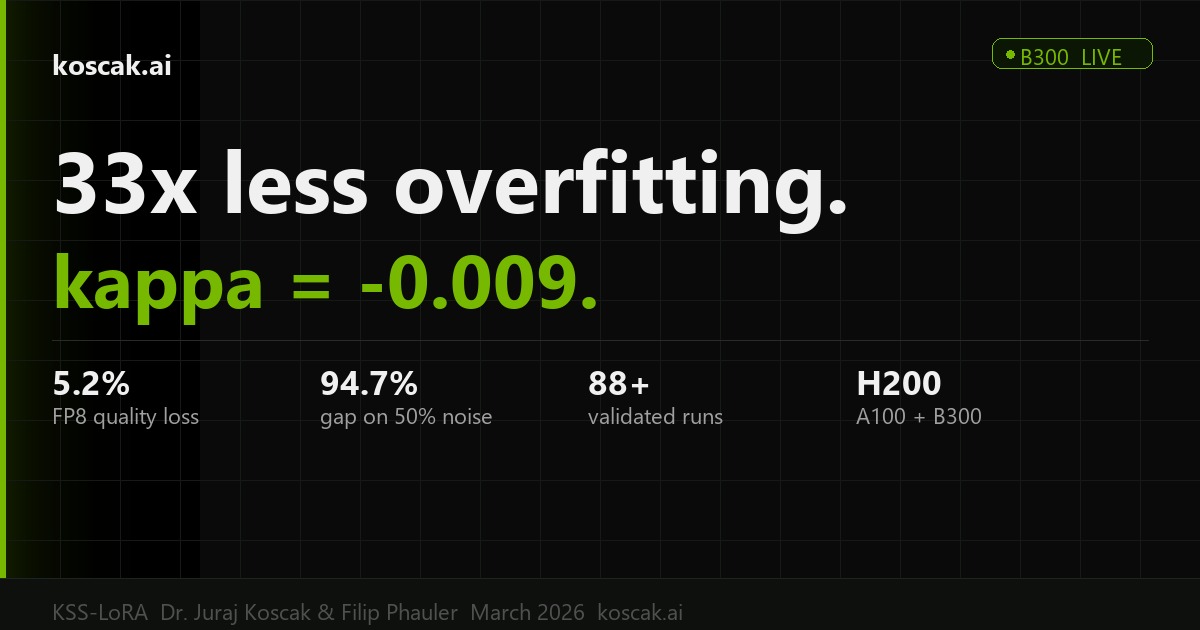

In 2010, Dr. Juraj Koščák published a method for training neural networks that nobody applied to modern AI. 10 papers. Zero citations in transformer literature. We're changing that.

10 published papers (IEEE IJCNN, Springer, Lambert). Zero citations in transformer LoRA literature. First application of 2010 stochastic weight selection to modern adapters. Family collaboration confirmed.

In 2010, at the Technical University of Košice in Slovakia, Dr. Juraj Koščák published a method for training neural networks differently. Instead of updating every weight in every cycle, he proved you could randomly select which weights to update, and the network would still learn. Sometimes better.

Dr. Juraj Koščák (PhD, TU Košice, red diploma, the highest distinction) published 10 papers between 2010–2015 on stochastic weight updates in neural networks. Core insight: stochastic selection of weight subsets during backpropagation produces competitive or superior convergence, with theoretical backing from Watanabe's SLT.
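The idea of updating only a random subset of weights per step can be illustrated with a toy example. The sketch below is not taken from Koščák's papers: the Bernoulli selection rule (`select_prob`), the linear regression task, and the function name `train` are all assumptions chosen to keep the example small.

```python
import random

def train(select_prob, steps=2000, lr=0.05, seed=0):
    """Fit y = w . x by SGD, updating only a random subset of weights each step."""
    rng = random.Random(seed)
    true_w = [2.0, -1.0, 0.5, 3.0]          # target weights of the toy problem
    w = [0.0] * len(true_w)
    for _ in range(steps):
        x = [rng.uniform(-1.0, 1.0) for _ in true_w]
        target = sum(t * xi for t, xi in zip(true_w, x))
        pred = sum(wi * xi for wi, xi in zip(w, x))
        err = pred - target                  # d(0.5 * err^2) / d(pred)
        for i, xi in enumerate(x):
            # Assumed selection rule: each weight is picked independently
            # with probability select_prob; unpicked weights are left as-is.
            if rng.random() < select_prob:
                w[i] -= lr * err * xi
    loss = sum((wi - t) ** 2 for wi, t in zip(w, true_w))
    return w, loss
```

Calling `train(1.0)` gives ordinary SGD, while `train(0.5)` updates roughly half of the weights per step. On this toy problem both settings recover the target weights, which is the behaviour the paragraph above describes; it says nothing about convergence on real networks.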

He published 10 papers about it. A book. Presented at IEEE, Springer, and Japanese conferences. Then the world moved on to transformers and large language models, and nobody connected the dots.

Publication venues: IEEE IJCNN 2010, Lambert Academic Publishing (97pp monograph, ISBN 3659231029), Springer NOSTRADAMUS 2012, SCIS&ISIS 2014 (Japan), Computers in Industry 2015. Despite 40+ sparse LoRA variants emerging since 2023, zero cite Koščák's original work.

This isn't a solo project dressed up as a team. It's a family research collaboration between the original author and his nephew, combining 15 years of published theory with modern engineering.

Active collaboration since 2026-03-22. Dr. Juraj Koščák advises on theoretical foundations and stochastic selection criteria. Filip Phauler (Phil) handles engineering: training infrastructure, model architecture, serving stack, and all 12 subsystems.

Original author of the stochastic weight selection technique. PhD with highest distinction. 10 published papers in international venues.

PhD (red diploma), TU Košice. Stochastic weight update theory. 10 papers: IEEE IJCNN, Springer, Lambert, SCIS&ISIS. Active advisor.

Full-stack AI engineer. Built 12 complete systems in 60 days. Machine vision, cognitive architecture, training infrastructure, security.

Solo full-stack ML. 578 commits/60 days. Vision (29 crates), AGI (7 crates, 12 techniques), training infra, security framework. EU-native.

The research sits within a broader tradition. The Technical University of Košice (TUKE) has produced significant work in computational intelligence, from Sinčák's ART networks to Jakša's fuzzy cognitive maps. This isn't isolated work; it's part of a Slovak ML research heritage that deserves recognition.

TUKE network context: Sinčák (ART/anti-backprop), Jakša/Matsuko (fuzzy cognitive maps), plus broader Eastern European CI tradition. DropLoRA (2025) independently converged on the same principle, confirming Koščák had it 15 years earlier. 7 TUKE techniques ready for implementation.

While preparing to publish, we built the infrastructure to actually run this research. 578 commits across 12 complete systems: not prototypes, not demos. Compiled, tested, deployed.

Foundation sprint: 578 commits, 6 repos, 12 subsystems. Peak velocity: 432 commits in 5 days. All crates compile clean on stable. 135 tests green. 6 tagged releases. 14 daemon-authored modules in production.

Machine vision (29 crates, 135 tests, KUKA robot arm with 78-micrometer accuracy). Cognitive architecture (12 techniques from neuroscience). Training infrastructure (Flash Attention integrated, 1.66x speedup). Security framework (4 releases, open-source). Autonomous code generation (14 self-written modules running in production).

Subsystems: vision (NCC, Hough, XLD, calibration, OCR), robotics (KUKA iiwa FK/IK 78μm, UR5, RSI), AGI (ART, ACT-R, FCM, NARS, GWT, DreamCoder, CIRL, Tsetlin), training (Flash Attn 1.66x, Q4 LoRA gradient fix, model factory), security (Decepticon v1.0.3, 82 attack vectors, 126 immune tests), autonomous codegen (168 codones, B2B commit proven).

Q4 LoRA gradient fix: call dequantize() before the matmul and add the LoRA delta before quantization. This restores the gradient chain. Also found: Gemma 4 MoE experts not loading (only the shared expert runs). Both fixes are documented and ready for a production run on A100.

This isn't a startup raising to build a team and figure things out. The research is done. The code is written. We need compute, hardware, and publication costs. Every euro goes directly to research output.

Engineering velocity is proven (432 commits/5 days). Marginal euro → marginal research output is near-linear. GPU hours are the binding constraint. No overhead: no office, no HR, no marketing team.

Discovered why training runs were plateauing. Gradients get weakened when passing through compressed layers. Fix documented and ready to deploy.

Q4 quantized forward pass attenuates LoRA gradients. Fix: dequantize BEFORE matmul. Also found MoE expert loading bug (only shared expert running). Both fixes ready for production.
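As a rough illustration of "dequantize before matmul", the sketch below keeps the base weights in 4-bit form, dequantizes them to float for the forward pass, and adds the LoRA path in float as well. It is not the project's code: the symmetric per-row quantization scheme and the names `quantize_q4`, `dequantize`, `forward`, and `grad_lora_b` are assumptions made for the example.

```python
def quantize_q4(row):
    """Assumed scheme: symmetric 4-bit quantization of one row (ints in [-7, 7])."""
    absmax = max(abs(v) for v in row)
    scale = absmax / 7.0 if absmax > 0 else 1.0
    q_row = [max(-7, min(7, round(v / scale))) for v in row]
    return q_row, scale

def dequantize(q_row, scale):
    """Map the 4-bit integers back to floats before any matmul."""
    return [q * scale for q in q_row]

def matvec(matrix, vec):
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]

def forward(q_weights, lora_a, lora_b, x):
    """y = dequantize(W_q) @ x + B @ (A @ x), with every product done in float."""
    base = matvec([dequantize(q, s) for q, s in q_weights], x)
    delta = matvec(lora_b, matvec(lora_a, x))
    return [b + d for b, d in zip(base, delta)]

def grad_lora_b(grad_out, lora_a, x):
    """dL/dB = outer(grad_out, A @ x); the rounding step never appears in it."""
    ax = matvec(lora_a, x)
    return [[g * h for h in ax] for g in grad_out]
```

In this arrangement the gradient for the adapter matrix `B` depends only on the upstream gradient and `A @ x`, so the 4-bit rounding of the frozen base weights does not sit on the adapter's gradient path.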

Complete industrial vision stack compiled and tested. KUKA robot arm IK achieves 78-micrometer accuracy. 21 example binaries verified on real sample images.

Original author of the stochastic weight selection technique is officially on board as research advisor. Family collaboration. Academic priority for KSS-LoRA established.

Most modern sparse training methods were invented recently. Koščák's technique predates them by 15 years: it was published in 2010, before transformers existed. Nobody connected the original research to modern LoRA adapters. We're the first.

40+ sparse LoRA variants exist (DropLoRA, SparseLoRA, etc). None cite Koščák 2010. His stochastic weight selection is mathematically distinct: rather than structured sparsity or magnitude pruning, it uses stochastic subset selection during backprop. DropLoRA (2025) independently converged on the same principle, confirming the approach 15 years later.

Yes. 10 papers published between 2010–2015 in peer-reviewed venues: IEEE International Joint Conference on Neural Networks (IJCNN), Springer NOSTRADAMUS, SCIS&ISIS (Japan), Computers in Industry (Elsevier), plus a monograph through Lambert Academic Publishing.

GPU compute for production training runs (~€15 per run), hardware for vision research validation (robot arm, depth camera), patent filing costs, and conference submission fees (NeurIPS / ICML). Zero corporate overhead: no office, no employees, just research.

GPU hours (A5000/A6000/A100 via RunPod), hardware (MyCobot 280, RealSense D435i, Jetson Orin Nano), patent filings (SS-LoRA + cognitive architecture), conference fees. Training cost is ~€15/run. Marginal compute → marginal research output is near-linear.

Everything is verifiable. The published papers are indexed in IEEE Xplore, Springer, and Google Scholar. The 578 commits are in git history. The 135 tests can be run. The training infrastructure compiles. We don't ask you to trust — we ask you to verify.

Foundation is complete (Q1–Q2 2026). Production training is in progress. Paper submission targeted for Q3 2026. Patent filing Q3 2026. First external benchmark results by end of Q2. Hardware validation (robot arm + vision) in Q3–Q4.